
LLM Wiki: The Self-Writing Knowledge Base Your Claude Code Setup is Missing


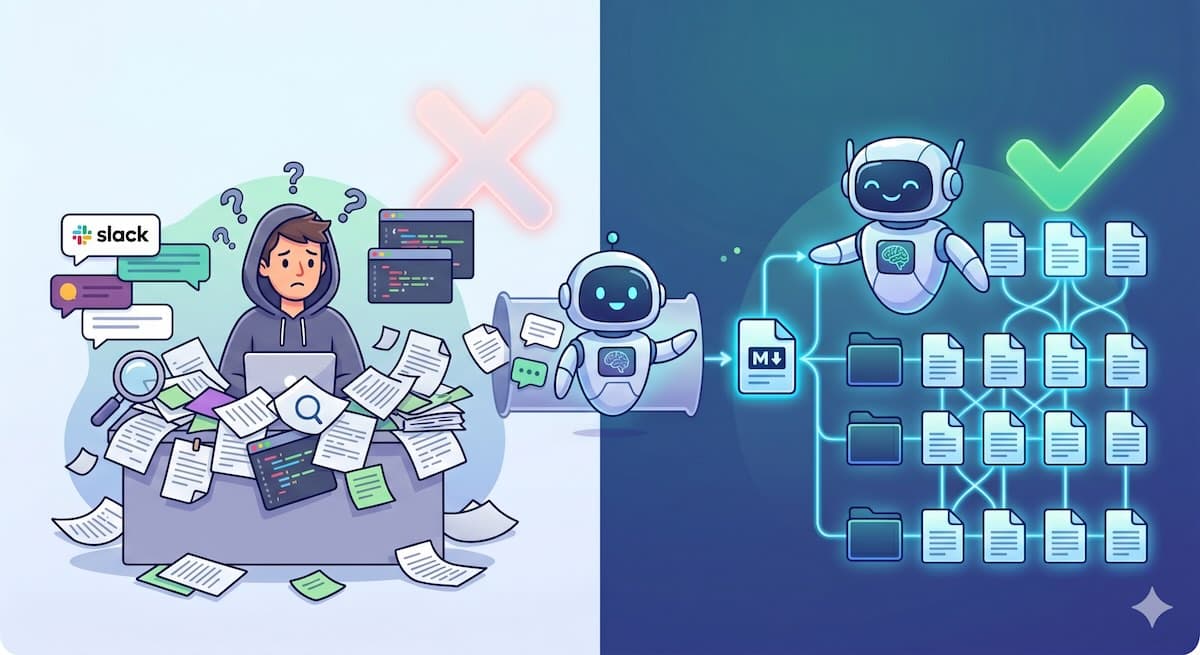

75% of developers re-answer questions they've already answered before (Develocity, 2024). You know the feeling. Someone asks about that thing you figured out three months ago, and you're digging through Slack threads, Notion pages, and your own brain trying to reconstruct it.

Karpathy dropped a gist on April 4, 2026, that fixes this. His LLM Wiki pattern turns your Claude Code agent into a personal librarian that reads everything you throw at it, builds a structured wiki, and keeps it up to date. No RAG pipeline. No vector database. No devops hell.

Sound familiar? Here's what it is, why it beats the alternatives, and exactly how to build one.

TL;DR

75% of devs keep answering the same questions because their knowledge is scattered across 47 tools (Develocity, 2024)

An LLM Wiki is a folder of interlinked markdown files your Claude Code agent builds and maintains for you

You drop sources in, ask questions, and occasionally tell it to lint. The wiki compounds in value with every ingest, at roughly 95% lower cost per query than RAG

By the end of this guide you'll have a working LLM Wiki with a real CLAUDE.md schema and your first ingested source

What the Hell is an LLM Wiki?

Only 1% of developers think their company excels at sharing code knowledge (Develocity, 2024). Karpathy's gist described a deceptively simple fix. Instead of dumping documents into a vector database and hoping RAG finds the right chunk, your LLM reads sources once and compiles what it learns into a permanent, interlinked markdown wiki (Karpathy, 2026).

Three layers. That's it.

Raw Sources at the bottom. These are articles, papers, PDFs, transcripts. Whatever you feed the system. The LLM reads them but never touches the originals.

The Wiki in the middle. This is where the magic lives. Entity pages for every tool, person, and company. Concept pages for ideas and patterns. Source summaries. Cross-reference links between everything. The LLM owns this layer completely. You don't write wiki pages. You tell it what sources to digest.

The schema on top. A single CLAUDE.md file that tells your agent how to structure pages, format links, and run the three core operations: Ingest, Query, and Lint.

The gist hit a nerve. Within weeks, the community shipped a dozen implementations: SwarmVault, WikiLoom, WeKnora from Tencent, Keppi's graph traversal layer, and a bunch more (community analysis, 2026). The derivatives collectively pulled 30K+ GitHub stars.

According to the LLM Wiki model, traditional knowledge management fails because humans can't be trusted to maintain documentation, but LLMs are perfectly suited for the task (Karpathy, 2026). The wiki is a compounding asset. Every source you ingest makes every future query richer, because the agent can traverse existing pages to contextualize new information.

Why did this simple idea explode while a thousand productivity apps didn't? Because it doesn't ask you to change your workflow. You already read things and ask questions. The wiki just remembers it all.

Why RAG Sucks, and LLM Wiki Doesn't

60% of companies already use generative AI in documentation workflows (State of Docs Report, 2025). But RAG is the wrong answer for personal knowledge management, and here's why.

RAG re-reads your documents for every single query. It chunks them, embeds them, and retrieves whatever cosine similarity thinks is relevant. At $0.05 per query, that sounds cheap until you do the math. 10,000 queries a day costs $15,000 a month in API fees (ToolHalla, 2026). Raw long context is worse: $0.63 per query and $189K a month.

Figure: Cost per query by approach (USD). LLM Wiki: $0.0025. RAG: $0.05. Long context: $0.63. LLM Wiki is 95% cheaper than RAG.

Sources: ToolHalla RAG vs Long Context 2026, Tech Jupjup community analysis 2026

An LLM Wiki inverts this entirely. You spend tokens once on ingest. The LLM reads a source and updates 10-15 wiki pages. After that, queries just read the pre-compiled markdown. You're looking at roughly 95% token savings per query compared to RAG (Tech Jupjup, 2026).
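The arithmetic behind these figures is easy to check yourself. Here's a minimal sketch that uses only the per-query prices quoted above and assumes a 30-day month. The function names are made up for illustration, and the one-time ingest cost is deliberately left out.

```python
# Per-query prices quoted in this article (USD).
COST_PER_QUERY = {
    "llm_wiki": 0.0025,
    "rag": 0.05,
    "long_context": 0.63,
}


def monthly_cost(per_query: float, queries_per_day: int, days: int = 30) -> float:
    """Monthly API spend, assuming a flat per-query price and a 30-day month."""
    return per_query * queries_per_day * days


def savings_vs(baseline: float, candidate: float) -> float:
    """Fraction saved per query by switching from baseline to candidate pricing."""
    return 1 - candidate / baseline


if __name__ == "__main__":
    for name, price in COST_PER_QUERY.items():
        print(f"{name}: ${monthly_cost(price, 10_000):,.0f} per month")
    saved = savings_vs(COST_PER_QUERY["rag"], COST_PER_QUERY["llm_wiki"])
    print(f"LLM Wiki vs RAG: {saved:.0%} cheaper per query")
```

Swap in your own query volume to see where the gap starts to matter for you.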

But cost isn't the real argument. The real argument is that your wiki improves over time, while RAG is only ever as good as your chunking strategy. When you ingest a new source into an LLM Wiki, the agent cross-references everything it already knows. It spots contradictions. It updates outdated claims. RAG adds more chunks to the pile.

Most companies using AI for docs are generating docs no one reads. The LLM Wiki pattern uses AI to make docs your agent actually uses. You feed it, it builds. You query, it answers. You lint, it self-corrects.

Think about your last five ChatGPT conversations. How much of what you learned is still retrievable? None of it. That's the problem an LLM Wiki solves.

Prerequisites

99% of developers report time savings from AI tools, with 68% saving 10+ hours per week (Atlassian Developer Experience Report, 2025). Adding an LLM Wiki compounds those savings because you no longer have to re-explain context for every new agent session. Here's what you need.

Claude Code CLI (any recent version)

A project directory where your wiki will live (I use ~/wiki/)

5 minutes for setup, 15 minutes for your first ingest

Basic familiarity with Markdown and Claude Code's CLAUDE.md convention

That's it. No vector database, no embedding model, no Pinecone API key you'll forget to rotate in six months.

What We're Building

Claude Code adoption grew 6x in six months, from roughly 3% to 18% of developers, and it's now the most recommended AI coding tool at 43% (JetBrains AI Pulse, 2026). You're in good company. Here's what you're building on top of it.

A directory of interlinked markdown files governed by a single CLAUDE.md schema. Your Claude Code agent reads the schema on every session and knows exactly how to structure, link, and maintain the wiki.

    wiki/
      CLAUDE.md                          # Schema, the brain
      index.md                           # Catalog of every page
      log.md                             # Append-only activity record
      entities/                          # People, tools, companies, projects
        promptmetrics.md
        karpathy.md
        claude-code.md
      concepts/                          # Ideas, patterns, architectures
        llm-wiki-pattern.md
        rag-vs-compiled-knowledge.md
      sources/                           # One summary per ingested source
        karpathy-gist-llm-wiki.md

The schema handles three operations. Ingest takes a source and updates the wiki. Query reads the index, pulls relevant pages, and answers from compiled knowledge. Lint checks for orphan pages, contradictions, and stale content.

How long before this becomes the default way for every dev team to store institutional knowledge? A year, tops.

Step 1: Scaffold Your Wiki Directory

73% of developers believe better knowledge sharing would improve their productivity by 50% or more (Develocity, 2024). The first step toward that improvement takes 30 seconds.

    mkdir -p ~/wiki/{entities,concepts,sources}
    touch ~/wiki/index.md
    touch ~/wiki/log.md

Flat directories beat nested hierarchies for LLM navigation. I started with a deep topic tree and watched Claude get lost trying to decide where new pages belonged. Three flat folders (entities, concepts, sources) eliminated that problem. The agent spends zero cycles on "where does this go" and all its cycles on content.

Seed your index.md with a placeholder:

# Wiki Index

## Entities

*(people, tools, companies, projects)*

## Concepts

*(ideas, patterns, architectures)*

## Sources

*(one summary per ingested source)*

Your log.md starts empty. It's append-only. Every ingest, query result, and lint run gets a timestamped entry. You'll never read it directly, but your agent uses it to understand what changed and when.
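To make "append-only" concrete, here's a rough sketch of what a log write looks like. It assumes a `YYYY-MM-DD HH:MM, summary` line format, which matches the sample log line in the ingest output in Step 3. The helper names `format_log_entry` and `append_log` are hypothetical. In practice Claude writes these entries for you, so this only illustrates the contract.

```python
from datetime import datetime
from pathlib import Path
from typing import Optional


def format_log_entry(summary: str, when: datetime) -> str:
    """Build one timestamped log line (assumed format: 'YYYY-MM-DD HH:MM, summary')."""
    return f"{when:%Y-%m-%d %H:%M}, {summary}"


def append_log(log_path: Path, summary: str, when: Optional[datetime] = None) -> str:
    """Append a single entry to log.md without rewriting earlier lines."""
    entry = format_log_entry(summary, when or datetime.now())
    # Mode "a" only ever adds to the end of the file, which keeps the log append-only.
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(entry + "\n")
    return entry
```

Because nothing is ever rewritten, the file doubles as a cheap audit trail you can diff in Git.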

Why flat folders? Because LLMs navigate by reading filenames and index entries, not by browsing directory trees. Deep nesting adds cognitive overhead for the agent but offers you zero benefit.

Step 2: Write the CLAUDE.md That Drives Everything

87% of developers use AI coding tools daily, and 41% are already comfortable delegating documentation generation to autonomous AI agents (State of Code, 2025). This schema is what you're delegating to.

The Full Schema

Create wiki/CLAUDE.md:

# LLM Wiki Schema

## Wiki conventions

- Entity pages in `entities/<name>.md`, one per tool, person, company, or project

- Concept pages in `concepts/<topic>.md`, one per idea, pattern, or architecture

- Source summaries in `sources/<slug>.md`, one per ingested article or document

- Every page starts with an H1 that matches its kebab-case filename

- Every page has frontmatter with `created`, `updated`, and `tags`

- Use `[[wikilinks]]` for all cross-references between pages

- Update `index.md` whenever you create a new page

- Log every operation to `log.md` with timestamp and summary

## Ingest workflow

When I give you a URL or file to ingest:

1. Read the source completely

2. Create or update entity pages for every named tool, person, company, or project mentioned

3. Create a concept page if the source introduces a new idea worth preserving

4. Write a source summary in `sources/<slug>.md` with the key claims and why this source matters

5. Update `index.md` with new page entries and one-line descriptions

6. Add cross-reference `[[wikilinks]]` to at least 3 existing pages from the new content

7. Log the ingest to `log.md`

8. Report what you changed: number of pages created, updated, and linked

## Query workflow

When I ask a question:

1. Read `index.md` first to see what pages are available

2. Pull the 2 to 5 most relevant pages and answer from their content

3. If you find a gap where the wiki should have an answer but doesn't, tell me and offer to research it

4. If the answer is useful enough to keep, offer to file it as a new concept or entity page

5. Never fabricate. If the wiki doesn't know something, say so

## Lint workflow

When I ask you to lint or I run `/lint`:

1. Find orphan pages: any page with zero incoming `[[wikilinks]]` from other pages

2. Flag contradictions: two pages making incompatible claims about the same topic

3. List pages not updated in the last 30 days

4. Check `index.md` for broken references: pages listed that don't exist, pages that exist but aren't listed

5. Report all issues and offer to fix them interactively

6. Log the lint run to `log.md`

What Each Section Does

The Wiki conventions define the file system contract. Claude knows exactly where everything goes, so it never asks, "What folder should this go in?" Entity, concept, or source. Pick one.
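If it helps to see the contract as code, here's a small sketch of a page skeleton that follows those conventions. It assumes YAML-style frontmatter between `---` lines (the schema only says "frontmatter", so the delimiter is my assumption), and `to_kebab` and `new_page` are hypothetical helpers, not part of Claude Code.

```python
import re
from datetime import date

KINDS = ("entities", "concepts", "sources")  # the three flat folders from the schema


def to_kebab(name: str) -> str:
    """Lowercase a title and join its alphanumeric runs with hyphens."""
    return "-".join(re.findall(r"[a-z0-9]+", name.lower()))


def new_page(kind: str, name: str, tags: list[str], today: date) -> tuple[str, str]:
    """Return (relative_path, initial_text) for a page that follows the conventions."""
    if kind not in KINDS:
        raise ValueError(f"kind must be one of {KINDS}")
    slug = to_kebab(name)
    text = (
        "---\n"
        f"created: {today.isoformat()}\n"
        f"updated: {today.isoformat()}\n"
        f"tags: [{', '.join(tags)}]\n"
        "---\n\n"
        f"# {slug}\n"  # H1 matches the kebab-case filename
    )
    return f"{kind}/{slug}.md", text
```

Calling `new_page("entities", "Claude Code", ["tool"], date.today())` gives you the path `entities/claude-code.md` plus a starter body with the three frontmatter fields filled in.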

The Ingest workflow is an eight-step pipeline that turns raw URLs into structured pages. The magic is in step 6: forcing at least three cross-references per new page automatically builds the link graph. Without this, your wiki is a pile of unrelated files.
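Step 6 is also the easiest rule to verify mechanically. The sketch below assumes wikilinks reference pages by filename without the `.md` extension, and it tolerates Obsidian-style `|alias` and `#anchor` suffixes. Both are assumptions on my part, since the schema doesn't spell out link syntax beyond `[[wikilinks]]`.

```python
import re

# Captures the link target, stopping before any "|alias" or "#anchor" suffix.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def wikilinks(text: str) -> set[str]:
    """Return the set of page names referenced as [[wikilinks]] in a page."""
    return {target.strip() for target in WIKILINK.findall(text)}


def meets_link_minimum(text: str, existing_pages: set[str], minimum: int = 3) -> bool:
    """Check step 6: the page links to at least `minimum` pages that already exist."""
    return len(wikilinks(text) & existing_pages) >= minimum
```

Links to pages that don't exist yet are ignored here on purpose, because they don't connect the new page to the existing graph.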

The Query workflow teaches Claude to check the index before answering. This prevents the most common failure mode: Claude inventing answers when the wiki already has the right data on a page it forgot to read.

The Lint workflow is your quality-control loop. Think of it as CI for your knowledge base.
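To push the CI analogy a little further, here's a sketch of the two link checks as plain code. It's an illustration of what the lint rules mean, not how Claude runs them. It assumes pages are keyed by filename without `.md`, that wikilinks use those same names, and that you leave index.md out of the input so a catalog entry doesn't hide an orphan. `lint_links` is a hypothetical name.

```python
import re

WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def lint_links(pages: dict[str, str]) -> dict[str, list[str]]:
    """Find orphan pages and broken wikilinks.

    `pages` maps a page name (filename without .md) to its markdown text.
    """
    incoming = {name: 0 for name in pages}
    broken = []
    for name, text in pages.items():
        for target in {t.strip() for t in WIKILINK.findall(text)}:
            if target == name:
                continue  # a self-link does not count as an incoming link
            if target in incoming:
                incoming[target] += 1
            else:
                broken.append(f"{name} -> {target}")
    orphans = sorted(n for n, count in incoming.items() if count == 0)
    return {"orphans": orphans, "broken": sorted(broken)}
```

Contradictions are the one lint rule that can't be reduced to a script like this. That check needs the model to actually read the pages.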

Drop this file in your wiki root. Claude Code sessions that open in this directory will load the schema and know how to operate your wiki. No additional configuration needed.

Step 3: Ingest Your First Source

Time to feed the beast. The MCP ecosystem grew from zero to 9,400+ public servers in 17 months, and 78% of enterprise AI teams now run MCP in production (Digital Applied, 2026). Your wiki is ready to join that ecosystem as a first-class knowledge source.

Run Your First Ingest

In Claude Code, with your wiki directory as the working directory:

You: Ingest this source: https://gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

What to Expect

When I ran this with the PromptMetrics docs as a source, Claude touched 11 pages in one shot: it created four entity pages for the tools and people referenced, three concept pages for the architectural patterns, and a source summary, then updated three existing pages with cross-references. Total cost was about $0.15 in API tokens. That's cheaper than the attention I'd spend writing any of those pages myself.

What you'll see:

Created: entities/karpathy.md, entities/claude-code.md, entities/mcp-protocol.md

Created: concepts/llm-wiki-pattern.md, concepts/memex-history.md, concepts/wiki-compounding.md

Created: sources/karpathy-gist-llm-wiki.md

Updated: index.md (7 new entries), concepts/knowledge-management.md (cross-references)

Log: 2026-05-04 14:32, Ingested Karpathy LLM Wiki gist, 7 pages created, 1 page updated

Your entities/karpathy.md now has a real page with his background, key contributions, and links to everything relevant. Your concepts/llm-wiki-pattern.md explains the three-layer architecture in detail. And index.md catalogs it all so future queries know exactly what's available.

The wiki now contains knowledge you didn't have to write down. That's the point. You didn't create entity pages for every tool mentioned in the source. Claude did that. You just told it to ingest a source.

Step 4: Query Your Wiki and File the Good Answers

LLM Wiki queries are roughly 95% cheaper than RAG (Tech Jupjup, 2026). But the real win isn't cost. It's that good answers get filed back into the wiki and become permanent.

Querying is where you feel the compounding. Instead of re-reading source documents, Claude reads index.md, pulls the 2 to 5 most relevant pages, and answers from compiled knowledge.

You: What are the three operations in an LLM Wiki and how do they relate to each other?

Claude reads index.md, finds concepts/llm-wiki-pattern.md, pulls your source summary, and answers in seconds. No web search. No re-chunking. No "I don't have access to that information."

Here's the critical part Karpathy emphasized: when an answer is good enough to keep, file it back into the wiki. Your query becomes a new page.

You: File that answer as a concept page called "llm-wiki-operations"

Claude creates concepts/llm-wiki-operations.md with the answer, adds [[wikilinks]] to related pages, and updates index.md. The next time you or anyone else asks that question, the answer is pre-compiled and linked into the graph.
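The index update is the piece that makes a filed answer findable later. Here's a sketch of that one step, assuming index.md uses the `## Entities`, `## Concepts`, and `## Sources` headings from the seed file and that entries look like `- [[page]]: description`. The entry format and the name `add_index_entry` are my assumptions. Claude may phrase entries differently.

```python
def add_index_entry(index_text: str, section: str, page: str, description: str) -> str:
    """Insert '- [[page]]: description' at the end of the given '## section' block."""
    lines = index_text.splitlines()
    heading = f"## {section}"
    if heading not in lines:
        raise ValueError(f"index has no section {heading!r}")
    entry = f"- [[{page}]]: {description}"
    if entry in lines:
        return index_text  # already listed, nothing to do
    start = lines.index(heading) + 1
    # The section ends at the next level-2 heading or at the end of the file.
    end = next(
        (i for i in range(start, len(lines)) if lines[i].startswith("## ")),
        len(lines),
    )
    # Skip back over trailing blank lines so the entry sits with the section's content.
    while end > start and not lines[end - 1].strip():
        end -= 1
    lines.insert(end, entry)
    return "\n".join(lines) + "\n"
```

Running it twice with the same entry returns the text unchanged, so re-filing the same answer won't create duplicate index lines.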

Most people treat their LLM Wiki as read-only after ingestion. That's like buying a notebook and never writing in it. The query-to-page pipeline is where the wiki goes from "useful" to "indispensable." Every good answer that gets filed becomes permanent institutional knowledge instead of ephemeral chat history.

What's the difference between a wiki you query and a wiki that grows from queries? About six months. By then the first one has stagnated and the second has become your team's most valuable asset.

Step 5: Lint, Maintain, and Keep It Alive

LLM context windows plateaued at 1-2 million tokens (Epoch AI, 2026). But usable context is only 30 to 50% of the advertised limits, which means your wiki pages need to stay clean and current. An unmaintained wiki rots.

You: Lint the wiki

Claude runs through the lint workflow from your schema. It finds orphan pages that nobody links to, flags contradictions, lists pages untouched for 30+ days, and catches broken index.md references.
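Two of those checks, staleness and index drift, are mechanical enough to sketch in a few lines. This assumes each page carries an `updated: YYYY-MM-DD` frontmatter line and that index.md lists pages as `[[wikilinks]]` by filename without `.md`. Both the formats and the function names are assumptions for illustration.

```python
import re
from datetime import date, timedelta

UPDATED = re.compile(r"^updated:\s*(\d{4}-\d{2}-\d{2})\s*$", re.MULTILINE)
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def stale_pages(pages: dict[str, str], today: date, max_age_days: int = 30) -> list[str]:
    """Return names of pages whose `updated` date is older than max_age_days.

    Pages with no parseable `updated` field are reported too, since their age is unknown.
    """
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for name, text in pages.items():
        match = UPDATED.search(text)
        if match is None or date.fromisoformat(match.group(1)) < cutoff:
            stale.append(name)
    return sorted(stale)


def index_drift(index_text: str, existing: set[str]) -> dict[str, list[str]]:
    """Compare pages linked from index.md with the pages that actually exist."""
    listed = {t.strip() for t in WIKILINK.findall(index_text)}
    return {
        "listed_but_missing": sorted(listed - existing),
        "existing_but_unlisted": sorted(existing - listed),
    }
```

A script like this can tell you which pages to look at. Deciding whether a stale page is actually wrong is still a job for the agent, or for you.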

Linting is the step everyone skips. I've watched three friends build LLM Wikis in the last month, and two of them ran zero lints after week one. Their wikis are now 40% stale pages and 15% contradictions. The wiki doesn't fail loudly. It degrades quietly. Run lint once a week. It takes 90 seconds, and your agent does all the work.

Set a recurring Claude Code session or make it a Friday habit. The lint output tells you exactly what to fix. Accept or reject each change interactively, and your wiki stays clean.

Claude Code can't do native scheduling like Manus can, but the MCP ecosystem has you covered—9,400+ public servers and counting. Someone's probably built a cron MCP server by now.

Could you automate the lint to run every Monday and dump a health report into your wiki? Should you? Only after you've done it manually a few times and know what a healthy wiki looks like.

Troubleshooting

64% of developers spend 30+ minutes a day searching for solutions (Stack Overflow / Statista, 2024). Don't let your wiki setup add to that number. Here's what breaks and how to fix it.

| Problem | Symptom | Fix |
|---|---|---|
| Claude ignores the schema | Agent doesn't follow ingest/query/lint workflows | Make sure CLAUDE.md is in the wiki root and you start Claude Code from that directory |
| Too many pages created per ingest | 20+ new pages for a short article, most are noise | Add "create pages only for significant entities and concepts" to the schema's ingest workflow |
| Wikilinks point to nonexistent pages | Broken references accumulating | Run lint more often. The schema catches these. |
| index.md grows unreadable | Pages listed but not findable | Group entries in index.md by category with brief one-liners. The schema already does this. |
| Token costs surprise you | First ingest burns more tokens than expected | Normal. First ingest is the most expensive. After that, updates touch 3 to 5 pages instead of 10 to 15. |
| Wiki pages contradict each other | Two pages say different things about the same topic | This is exactly what lint catches. Run it and let Claude reconcile. |

FAQ

Is this just RAG with extra steps?

No. RAG retrieves document chunks at query time and re-reads them every time at roughly $0.05 per query (ToolHalla, 2026). An LLM Wiki reads sources once during ingest and writes permanent markdown pages. Queries read pre-compiled pages instead of raw source chunks. It's about 95% cheaper per query.

Do I need Obsidian or a specific tool?

You don't need anything beyond a text editor and Claude Code. The wiki is a folder of markdown files. That said, Obsidian's graph view is a nice way to visualize your wiki as it grows. Obsidian has roughly 1-1.5 million users and 2,000+ plugins (Practical PKM, 2026). There's already an MCP server for it.

How is this different from Claude Code's built-in memory?

Claude Code's memory stores facts that the agent learns during sessions, but 75% of developers still find themselves re-answering questions, even with existing tooling (Develocity, 2024). An LLM Wiki stores structured, interlinked pages that the agent builds from your sources. Memory is ephemeral highlights. The wiki is a permanent library you control. You can read it, edit it, version it in Git, and share it.

What happens when my wiki gets big?

LLM context windows hit 1 to 2 million tokens (Epoch AI, 2026), but effective context is 30 to 50% below advertised limits. The index.md pattern handles scale: Claude reads the index to find relevant pages instead of loading every page. If you hit limits anyway, split into topic-specific wikis.

Can I use this with a team?

Karpathy's gist was personal-first, but the community shipped team implementations within weeks. Beever Atlas launched a team-native version with Neo4j graphs and Weaviate semantic search, joining 78% of enterprise AI teams already running MCP in production (Digital Applied, 2026). For smaller teams, a shared Git repo with a CLAUDE.md works fine. For larger teams, you'll want the Beever Atlas approach with proper graph storage.

Next Steps

53% of developers say waiting for answers disrupts their workflow (Stack Overflow, 2024). Your LLM Wiki eliminates that wait time for questions you've already answered. Here's what to do next.

Ingest three more sources this week. Choose things you'd normally bookmark and forget about. Watch the wiki compound. Run lint every Friday.

When you're ready to level up, wire in an Obsidian MCP server so your agent can read and write to your vault directly.

Then build agent skills that use the wiki as a knowledge base, rather than relying on web search or stale training data.

The wiki gets more useful every time you feed it. Start now.